Continuous Improvement: For Good Measure

Heath Ledger, Mark Addy, Paul Bettany, Laura Fraser, and Alan Tudyk in the 2001 Columbia Pictures film A Knight’s Tale

“You have been weighed. You have been measured. And you have been found wanting.”

This line from the 2001 film A Knight’s Tale brings back how I always felt about performance appraisals. Research shows I am not alone; no one really likes to be measured. Measures, metrics and measurement, especially when they concern our own performance, are not popular topics.

This is unfortunate for continuous improvement initiatives because metrics and measurement are the foundation of improvement. I simplify continuous improvement (CI) as:

Measure where you are – Improve – Measure where you got to – Repeat

So without measurement there really couldn’t be any improvement. How would you know you improved?

CI involves:

· Metrics: measures, metrics, indicators, key performance indicators (KPIs) either in numbers (continuous data) or attributes (discrete data like pass/fail, on/off, yes/no, etc.)

· Measurement: the process of how we measure to ensure precision and accuracy every time.

Issues with Metrics

There are some issues with the numbers themselves: too many metrics, conflicting metrics, the wrong metrics, and leading vs. lagging indicators, to name a few.

· Too Many Metrics – People have measured performance since pre-history. The phrase Key Performance Indicator (KPI) entered the lexicon with the work of Art Schneiderman at Analog Devices in 1987 and Robert Kaplan and David Norton’s work on the Balanced Scorecard in the 1990s. The idea was that a few critical metrics, 1 or 2 each in 4 domains (Finance, Marketing, Operations, and Human Resources), determined the entire performance of a corporation. Alas, the word “key” in KPIs has been lost in many organizations. Now, in the era of Big Data, it seems that because we can measure and analyze something, we should. In CI initiatives, we often create new metrics, and that can overwhelm an already over-measured workforce. Use what you have and rationalize metrics wherever possible.

· The Wrong Metrics – In CI we often find that, despite everything we are measuring, we aren’t measuring what matters. For instance, we may collect data on total sales by region and not by customers that buy across regions, leading us to misjudge who our top customers are. Or we may track inventory turn but fail to count work in process as inventory, leading us to over-order from suppliers. In addition to not measuring critical metrics, we make two fallacious assumptions:

- You can’t measure that! This is usually said about qualitative data, for example data strongly influenced by human emotion. Customers who are satisfied will continue to buy from you, but customers who are surprised and delighted will tell their friends and help you grow your business. How do you measure delight? In 2003, Frederick Reichheld created the Net Promoter Score based upon the answer to the question “how likely are you to recommend?” This is a “proxy measure” for customer delight. Proxy measures are often based upon correlation analysis, but can be based upon simple observation: “When this happens, I also notice that this happens.” I have seen proxy measures for risk reduction based on the percentage of procedures signed off in a log; I have seen proxy measures for continuous improvement deployment effectiveness based on the time between the first CI project someone works on and the second and third. Proxy measures take some thinking, but then so does improvement.

- Results are all that matters! This is often said by executives whose bonuses are tied to “the bottom line.” We should definitely track and monitor results, but these are lagging metrics. “Accounting numbers are always looking in the rear-view mirror.” “Last month’s sales are a fact; this month’s calls per week and close ratio you can do something about.” Or in the words of my Results-Alliance CI colleague, Ric Taylor, “Monitor outputs; control inputs.” To control a process you have to get upstream to leading metrics: for example, material supplier cost drives overall cost, and share of wallet drives customer profitability. Ask what inputs drive the output. Then test the impact and set up ways to control the variation.

· Conflicting Metrics – If you want to uncover conflicting metrics, ask your people, the process users, “Are there any areas where we ask you to do one thing, but reward you for doing something else?” At one call center I worked with, a CSR’s answer was shocking. “I’m told we want to increase customer satisfaction, but I’m measured and paid a bonus based upon average call handle time. They’ve even put the current call clock on my screen along with the week’s average handle time. So if I get an unhappy customer who’s going to take a while, I disconnect while I’m talking. Then I pick up the next call in the queue.” Identify conflict and resolve it.
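To make the idea of a proxy measure concrete, here is a minimal sketch of how a Net Promoter Score is commonly calculated. It assumes the widely published convention of a 0 to 10 rating scale, with 9 and 10 counted as promoters and 0 through 6 as detractors; the function name and the sample ratings are hypothetical and for illustration only.

```python
def net_promoter_score(ratings):
    """Return a score from -100 to 100 based on 0-10 'likely to recommend' ratings.

    Assumed convention: 9-10 = promoter, 7-8 = passive, 0-6 = detractor.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Percentage of promoters minus percentage of detractors
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical survey responses (not real data)
sample_ratings = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
nps = net_promoter_score(sample_ratings)
print(nps)
```

With these made-up ratings there are five promoters and three detractors out of ten responses, so the score is 20. The passives (7 and 8) count toward the total but toward neither group.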

Issues with the Measurement Process

If we are tracking improvement, we want to know we can measure precisely and accurately. There may be natural variation in the results achieved at any stage of the process. We can describe that variation with the mean and standard deviation of those numbers. Our concern with the measurement process is to understand how much of the variation in our results comes from the process itself and how much comes from measuring it: the measurement equipment and the people using it.

Imagine that I want to lose weight. First, I need a measurement device, a bathroom scale. I can choose a scale according to many criteria, but I want a scale that is precise. I want a scale that can differentiate weight within half a pound or so; a weigh-station truck scale wouldn’t likely meet the test. Although my scale is digital and reads in increments of 0.2 pounds, movements of about half a pound are what concern me.

Next, I need a consistent (precise) measurement process. I’ve chosen to weigh myself every morning before getting dressed and having breakfast, with the scale in the same place, and on a level surface. Even though I’ve learned that if I stand on my toes towards the front of the scale my weight is less, I stand flat-footed in the center. I need to be assured that I can repeat the same process precisely so I can depend on the precision of the scale, so that I am comparing apples to apples or, in this case, relatively precise pounds. Repeatability is one measure of precision. Reproducibility is another.

Repeatability determines whether the same person can use the same measurement device and get the same result, or whether there is variation in the measurement device. Reproducibility of a measurement process determines whether different people can use the process and the equipment to get the same result, or whether there is variation caused by different people measuring slightly differently. I would need to have a clear and reproducible measurement process if I were running a weight loss competition, for example.
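The two ideas can be sketched in a few lines of Python. This is a simplified illustration, not a full gage study: it assumes every person measures the same object the same number of times, and it treats repeatability as the pooled spread within each person's readings and reproducibility as the spread between the people's averages. The names and readings are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev, variance

# Hypothetical readings in pounds: three people each weigh the same object three times
readings = {
    "Ann": [150.2, 150.4, 150.2],
    "Bob": [150.8, 150.6, 150.8],
    "Cy": [150.4, 150.4, 150.6],
}


def repeatability_sd(data):
    """Pooled within-person standard deviation (same person, same device)."""
    # With equal numbers of trials, pooling is the average of the within-person variances
    within_variances = [variance(trials) for trials in data.values()]
    return sqrt(mean(within_variances))


def reproducibility_sd(data):
    """Standard deviation of the per-person averages (different people, same device)."""
    person_means = [mean(trials) for trials in data.values()]
    return stdev(person_means)


print(round(repeatability_sd(readings), 3))
print(round(reproducibility_sd(readings), 3))
```

In this made-up data each person is individually consistent, but the people disagree with one another, so the between-person spread is larger than the within-person spread. That pattern would point toward clarifying the measurement procedure rather than replacing the scale.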

I also want the scale to be accurate; that is, the pounds measured should bear some consistent relationship to an external standard. In my case, the external standard is my doctor’s scale. That scale consistently weighs 3 pounds heavier than mine, but then I’m seldom on it completely undressed or before breakfast.

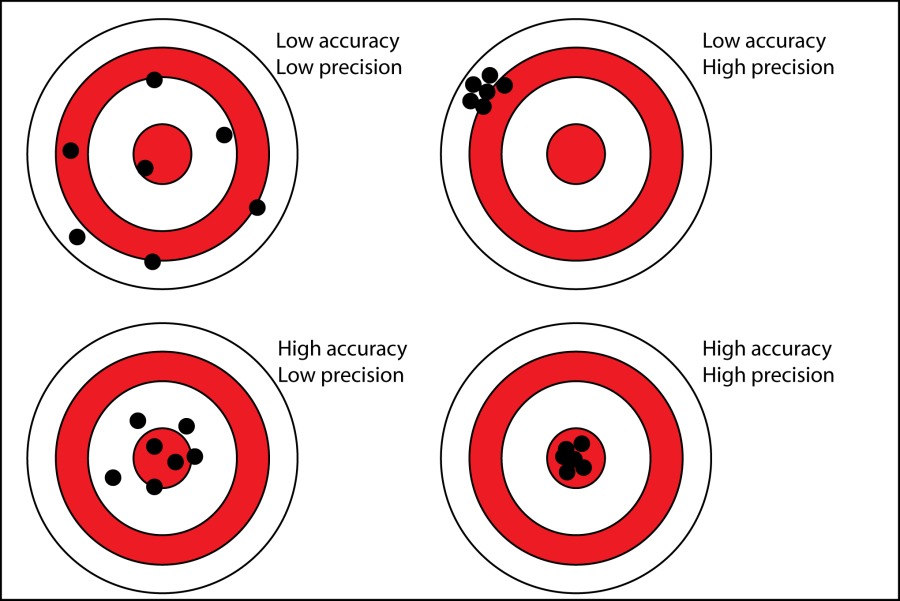

A random pattern across the entire target is neither precise nor accurate

A tight cluster of bullet holes in the outer ring is precise, but not accurate.

A widely spaced grouping, around the bullseye is accurate, but not precise.

A tight group in the center is both precise and accurate.

Precision is the absence of variation in the results. Accuracy is the closeness to the intended true value. (I accepted my doctor’s scale as truth in my example. In real life, this might be set by the customer.)
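As a rough sketch of these two definitions, accuracy can be expressed as bias (the average reading minus the accepted true value) and precision as the standard deviation of repeated readings. The function name and the numbers below are hypothetical, chosen to echo a home scale that reads about 3 pounds lighter than a reference scale.

```python
from statistics import mean, stdev


def bias_and_spread(readings, reference):
    """Return (bias, spread) for repeated readings of the same item.

    bias   = mean reading minus the reference value (closer to 0 is more accurate)
    spread = sample standard deviation (smaller is more precise)
    """
    return mean(readings) - reference, stdev(readings)


# Hypothetical repeated readings on a home scale, in pounds;
# the reference value stands in for the accepted "true" weight.
home_readings = [180.0, 180.2, 179.8, 180.0, 180.0]
bias, spread = bias_and_spread(home_readings, reference=183.0)
print(round(bias, 2), round(spread, 2))
```

Here the spread is small and the bias is about 3 pounds low: the tight cluster in the outer ring, precise but not accurate. A consistent bias like this is usually easier to live with than a large spread, because it can be corrected by a fixed offset.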

Metrics, Measurement and Improvement

Improving a business process may mean reducing the process cycle time, reducing scrap materials, eliminating rework, or increasing the process yield (increased outputs for the same inputs). As CI practitioners we often reduce waste and/or reduce variation in the process. If you are a CI practitioner you know that a Measurement Systems Analysis (MSA) analyzes repeatability and reproducibility to determine how much of the variation in your process is due to variation in the measurement. If you can’t trust the scale or you are using it differently each time, how would you know you actually lost weight?
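One way to picture what an MSA reports is the share of total observed variation that comes from the measurement system. The sketch below assumes the repeatability and reproducibility sources are independent, so their variances (squared standard deviations) add; the function name and the numbers are hypothetical, and a formal study would follow a fuller procedure.

```python
def measurement_share(repeatability_sd, reproducibility_sd, total_sd):
    """Fraction of total observed variance attributable to the measurement system.

    Assumes independent sources, so variances add:
    measurement variance = repeatability variance + reproducibility variance.
    """
    if total_sd <= 0:
        raise ValueError("total_sd must be positive")
    measurement_variance = repeatability_sd ** 2 + reproducibility_sd ** 2
    return measurement_variance / total_sd ** 2


# Hypothetical standard deviations, in pounds
share = measurement_share(repeatability_sd=0.3, reproducibility_sd=0.4, total_sd=1.0)
print(f"{share:.0%} of observed variance comes from measurement")
```

With these made-up numbers a quarter of the observed variance comes from measuring rather than from the process itself. The larger that share, the harder it is to tell a real improvement from measurement noise.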

So as much as we don’t like to be “weighed… measured, and… found wanting,” we must endure this indignity in order to improve.

If you enjoyed this, please join the conversation. I welcome any comments and shares. Please visit some of my other posts.

About Alan Culler:

I'm a change guy. I have helped leaders make strategic change for over 37 years. I work with Results-Alliance, a network of independent consultants and small firms dedicated to teaching clients what we know about making change sustainably.

If you'd like more information email me at alan@results-alliance.com